For the most part, people are very trusting when it comes to data. Most don’t hear statistics on the news or see graph visualizations and think, “I bet the data is wrong.” We presume the black box is doing its job right. But…the nerds know. The nerds are these people that are inside that black box looking at the actual data. Nerds would have you know that not only is the data wrong, but it is wrong every time. It’s just a matter of amplitude.

Nerds' warnings about data quality mostly look like hand waving to the rest of us, and we tell ourselves, “the nerds will figure it out.” But there are times when data quality meets a failed human process, and that turns our gaze to the nerds. With automation dominating everything and data being the basis for that automation, we find ourselves asking the nerds for better data quality. Otherwise, we risk the creation of large-scale automated human collisions with dirty or duplicate data.

Lifecycle of Data

Data has a lifecycle from inception, to processing, to analyzing, then ultimately archiving and deleting. Nerds know that getting data wrong can happen anywhere during that lifecycle. Here are some ways data quality issues can emerge during the data’s lifecycle.

Inception

The inception of data is THE biggest place where data quality issues occur because it is where the most work is done to originate the record. Errors here can be unintentional or intentional. Let's go over some examples.

Unintentional Errors

Unintentional errors aren’t always just fat-fingering during data entry. Entire fields can be filled out incorrectly because a form might be goofy. For example, some novice web developer might have the harebrained idea that the field for the last name should come before the field for the first name. Users instinctively fill out their first name and then their last name, which creates a lot of dirty data. Another cause is that the dynamic sizing of the webpage can jumble the form fields when viewed on a phone. But even if the fields are perfectly normal, they can still be filled out wrong by mistake.

There is also the telephone game problem. If the person is not directly filling out the form and is working through a call center agent, there is always an air gap between what the person said and what the agent typed.

Intentional Errors

Organizations get in their own way as well. We’ve seen this a lot in the healthcare industry where data standards like HL7 are simply not followed by the hospital staff. When pressed as to why, staff members complained that there are just too many fields to navigate. The remedy was to use a field intended for something else to enter their data in a pinch. Of course, healthcare isn’t the only place this happens. Sales organizations often have been known to do the same thing. One common practice in sales is to make fields more informational by concatenating multiple values in the field. For example, sales reps often use [Company Name]-[Opportunity] to represent their opportunity titles. This would look like “GE-Finance DW”.
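To see why concatenated fields turn into dirty data, here is a minimal Python sketch of what a downstream process has to do to pull the values back apart. The function name and the second sample title are hypothetical, and splitting on the first hyphen is only one assumed convention, not a rule any sales system enforces.

```python
from typing import Optional, Tuple


def split_opportunity_title(title: str) -> Tuple[str, Optional[str]]:
    """Split a '[Company Name]-[Opportunity]' title on the first hyphen.

    Returns (company, opportunity). If there is no hyphen, the whole
    title is treated as the company and the opportunity is None.
    """
    company, separator, opportunity = title.partition("-")
    if not separator:
        return title.strip(), None
    return company.strip(), opportunity.strip()


# The title from the text splits cleanly.
print(split_opportunity_title("GE-Finance DW"))          # ('GE', 'Finance DW')

# A hypothetical company name that contains a hyphen does not.
print(split_opportunity_title("Coca-Cola-ERP Upgrade"))  # ('Coca', 'Cola-ERP Upgrade')
```

The second title shows the trap: once two values share one field, no splitting rule can reliably tell where the company name ends and the opportunity begins.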

Whether intentional or unintentional, at least a portion of all data quality errors are interface related. Just like having an air gap can create dirty data, a bad interface will force people to improvise in a pinch or misinterpret the intention of the fields.

Because there is so much core manipulation of the data, the inception stage is by far the most variable source of data quality issues. The range of reasons that data quality issues may occur is enormous. However, the scale of any single error cannot always be laid at the feet of its inception. That often lies with the processing of the data.

Unending Inception

The inception of data happens over and over for the same person, the same product, and the same organizational assets. This happens repeatedly because organizations have multiple systems, each with its own touchpoints. For example, a customer might use a website, then use a call center, then open a marketing email, then open a support ticket. Each of these scenarios usually occurs in its own application system. So each system has created its own representation of the customer record by the time the data is merged together during integration.

Processing

Errors at a large scale can happen here. Once the data is written, logic is used to take that data and make it do things that scale to the organization's needs, whether that is kicking off a marketing campaign, aggregating, or kicking off a separate process. These errors usually come from faulty logic that defines the data’s processing. Challenges in data processing usually fall into a few different categories:

Decentralized Logic

It sounds good to give users the autonomy to do their own data processing, particularly for downstream analytics. Individual productivity is perceived to be high, and it can be fulfilling to make discoveries in data. The impact on the broader organization, however, can be very negative. The key issue is the logic that is written to turn data into an asset. When decisions are made in silos about what that logic does, there is rarely somebody checking in on it after the fact. As organizations build their culture around these data assets, they end up adopting this logic at scale. With such logic being written in a decentralized way, there is no one place to collaborate or validate assumptions.

Back before the housing market crash, Intricity team members were involved in a large data assessment done at one of the major insurance providers. The organization had over 140 department heads that each led decentralized data analyst teams. The comment from the client’s own solution architect after interviewing each of the department heads was, “This is death by a billion paper cuts. Throw a grenade into the server room and start over.”

Centralized Logic

Centralized logic would seem to be the solution to the “death by a billion paper cuts” problem. However, centralized logic has its own risks. If the organization does not seriously invest in the data assets, or takes the cheap route when it comes to those writing the logic, it can suffer the same problems at an even bigger scale. Since the data asset is centralized, any error has more of an impact on the larger organization through its use and reuse.

However, the advantage of centralized logic is that auditing can happen in a centralized way, and fixes can also be made centrally, presuming the architecture is deployed correctly.

Analyzing

Much of processing is for analytics. In some cases, they are one and the same, particularly for decentralized corporate cultures.

Scalable Consumption

One of the problems in analytics is high-scale construction and consumption. Defining how queries are assembled, labeled, and formatted can be highly variable as an organization's data consumption needs scale. Those inconsistencies can create costly confusion.

Storytelling Analytics

Analytics is the point where data is formatted to tell a story, but whether that story represents reality or not is a different matter. Even rigorous scientific papers that pour hours into data draw the wrong or useless conclusions (https://reason.com/2016/08/26/most-scientific-results-are-wrong-or-use/). The presentation of data is often like the presentation of a survey question. How you ask the question really matters. For example, business news networks will do deep zooms on graphs to make them look like there is a dramatic drop in stock prices. The drop could be only a few dollars, but if the axis is zoomed far enough, it will provide the right psychological effect for the glancers.
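The zoom effect can be put into numbers. The Python sketch below uses made-up prices and axis ranges (all hypothetical) to compare the actual percentage drop with the share of the chart's height that the same drop occupies.

```python
def visual_drop_fraction(start, end, axis_min, axis_max):
    """Fraction of the chart's vertical range covered by the move from start to end."""
    return abs(start - end) / (axis_max - axis_min)


# Hypothetical stock that slips from $150 to $147.
start_price, end_price = 150.0, 147.0
actual_drop = (start_price - end_price) / start_price

# The same $3 move on a zero-based axis versus a tightly zoomed axis.
full_axis = visual_drop_fraction(start_price, end_price, 0.0, 200.0)
zoomed_axis = visual_drop_fraction(start_price, end_price, 146.0, 151.0)

print(f"Actual drop:                {actual_drop:.1%}")   # 2.0%
print(f"Height used on 0-200 axis:  {full_axis:.1%}")     # 1.5%
print(f"Height used on zoomed axis: {zoomed_axis:.1%}")   # 60.0%
```

The data is identical in both charts. Only the axis range changed, yet a 2% dip now fills more than half of the picture.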

Forecasting

This might come as a shock to some, but corporate forecasting has nothing to do with analytics or science. Folks who do forecasting usually ask their superiors what result they want and then proceed to create a convincing model to match those conclusions. If models could forecast the future, we wouldn’t have recessions, depressions, famines, diseases, etc. At human scale, forecasting is nearly impossible. At planetary scale, like the motion of the planets, forecasting actually becomes useful (e.g., we can forecast when we should blast off from Earth to land on Mars). Any smaller than that and things just become sketchy. Even something as large as the weather can’t be predicted accurately beyond a handful of days. Therefore, at human scale, forecasts are usually a method of driving behavior rather than a useful prediction of the future.

Archiving and Deleting

Data quality in archiving is usually only a problem if the data is stored in a legacy format that is no longer recognized. This is often the case in healthcare where record storage may have as much as a two-decade lifespan. The legacy formats are often obscure in their storage and query type making the quality of the data suspect. Many hospitals struggle to create a reliable query structure over such sources, often needing to automate the data structure into more modern formats to successfully execute analysis.

Fixing It

There is no fix to data quality. There are just a host of difficult or expensive workarounds. The data quality and master data management (MDM) spaces have been running on a treadmill for the last 20 years. However, at the very least the speed of the treadmill has slowed down enough today that mere mortals can implement pieces of it without dropping a million dollars.

Inception

Unintentional Errors

Many of the unintentional errors at a human level are user interface related. Even the intentional errors often are. The issue is that the interface doesn’t even try to meet the user halfway at the moment of entry. For packaged applications, this is often something the larger vendors do include as part of their solutions. For custom applications, there are some good form practices that can be leveraged: https://www.w3computing.com/systemsanalysis/ensuring-data-quality-input-validation/
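As a rough illustration of meeting the user halfway at the moment of entry, here is a minimal Python sketch of field-level rules for a custom form. The field names, patterns, and messages are all assumptions made for the example, and the email check is deliberately loose.

```python
import re

# Letters only, optionally joined by a space, apostrophe, or hyphen
# (e.g. "Anne-Marie", "O'Brien"). An assumed rule, not a standard.
NAME_PATTERN = re.compile(r"^[^\W\d_]+(?:[ '\-][^\W\d_]+)*$")
# Loose shape check: something@something.something, no whitespace.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_contact(form: dict) -> dict:
    """Return a dict of field name -> problem; an empty dict means the entry passed."""
    errors = {}
    for field in ("first_name", "last_name"):
        value = form.get(field, "").strip()
        if not value:
            errors[field] = "required"
        elif not NAME_PATTERN.match(value):
            errors[field] = "unexpected characters"
    email = form.get("email", "").strip()
    if not EMAIL_PATTERN.match(email):
        errors["email"] = "does not look like an email address"
    return errors


print(validate_contact({"first_name": "Ada", "last_name": "Lovelace",
                        "email": "ada@example.com"}))   # {}
print(validate_contact({"first_name": "", "last_name": "Sm1th",
                        "email": "nope"}))
```

Rules like these catch malformed values while the user is still on the form. They cannot catch a well-formed value typed into the wrong field, such as a first name entered as a last name, which is why the layout of the form still matters.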

There are also several free(ish) tools to define rules in forms to validate data quality: https://www.goodfirms.co/data-entry-software/blog/best-free-open-source-data-entry-software

Unending Inception

This problem is the basis of multi-million dollar spends within corporations. At least a piece of this problem, specifically the identification of duplicate records, can be solved for a mere $30,000-$50,000 spend.

Today organizations have circumvented the customer MDM problem by shipping all their data to 3rd parties that have extensive customer graphs. These vendors charge a premium to tie the business’s customer data to that graph, and all of them charge a per-record fee for the processing. So paying a million dollars a year is not uncommon. These 3rd parties provide a lot of value because they enrich customers’ data with additional attributes that the original data did not have. But why send duplicate records to the enrichment vendor when the cost is calculated on a per-record basis? Somewhere between 20% and 50% of customer records are duplicates. So right off the top, a big chunk of spend can be reduced.
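The arithmetic behind that saving can be sketched in a few lines of Python. The records, the per-record fee, and the matching rule below are all hypothetical, and the rule is the simplest one available: an exact match after normalizing name and email. Real duplicate identification is considerably fuzzier than this.

```python
import re


def match_key(record: dict) -> tuple:
    """Normalize name and email so trivially different entries compare equal."""
    name = re.sub(r"[^a-z]", "", record["name"].lower())
    email = record["email"].strip().lower()
    return (name, email)


def drop_duplicates(records: list) -> list:
    """Keep the first record seen for each normalized key."""
    first_seen = {}
    for record in records:
        first_seen.setdefault(match_key(record), record)
    return list(first_seen.values())


# Hypothetical customer records gathered from different systems.
records = [
    {"name": "Jane Doe", "email": "jane@example.com"},
    {"name": "JANE DOE ", "email": "Jane@Example.com"},
    {"name": "Jane  Doe", "email": "jane@example.com "},
    {"name": "John Roe", "email": "john@example.com"},
]

unique = drop_duplicates(records)
fee_per_record = 0.05  # hypothetical enrichment fee, in dollars
savings = (len(records) - len(unique)) * fee_per_record

print(f"{len(records)} records sent down to {len(unique)}")  # 4 records sent down to 2
print(f"Saved per run: ${savings:.2f}")                      # Saved per run: $0.10
```

Scaled from four records to millions, the same subtraction is what takes a chunk out of the per-record bill.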

Truelty

This is where the Truelty solution sought to go after the data quality market. Rather than spin up their own graph, they leveraged the power of Snowflake’s compute to identify duplicates on a 1st party basis. The code generation engine brings the duplicate identification processing to the client’s Snowflake instance rather than the client divulging their customer data to Truelty.

Processing

The solutions for processing have totally exploded in the last five years. The entire ETL market has been turned on its ear. Where the dust settles is really hard to tell, even now. There seem to be two camps. One camp leaves the logic in a coding format but wraps it in an object management architecture. The other camp gives the logic a visual flow interface that leverages the power of the database for processing.

Decentralized Logic

Centralization of logic is still a major challenge in the new world of cloud data processing. Just because there are new computation methods doesn’t mean that native tools are as functional as platforms that have been enhanced over the course of 20 years. Things like reusable mapplets, for example, still need to be built into some platforms, but they will eventually arrive. On the other hand, some of these legacy high-functioning tools are still clinging to their old licensing models by processing via ETL rather than ELT.

DBT

Organizations that decide to keep their logic in the native code format need to be able to manage their code in a way that it doesn’t become bespoke islands of logic. DBT allows organizations to bundle coding patterns into callable components so code reuse is maximized and code maintenance is reduced. Thus the “decentralized logic” problem can be addressed without completely abstracting the code itself.

Matillion

Matillion delivers the vision of logical widgets in a GUI. These widgets translate into native SQL logic which high-scale cloud databases can process. The visual experience is on par with ETL tools organizations may be already used to leveraging.

Analyzing

The quality fixes for some of the challenges in analytics are solutions that have been around for a long time. However, many modern vendors providing these solutions have already been gobbled up by the bigger cloud providers.

Scalable Consumption

The key issue in scalable consumption is the management of business metadata in the analytical layer. The best an organization can do at scale is to centralize the query objects and their formatting to get the most governable results. These centralized query objects allow end users to assemble analytics without having to write their own SQL code. A well-governed metadata layer can act as a centralized hub for content moderation to ensure that vetted building blocks for analytics are being provided to end users.
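The idea of vetted building blocks can be sketched in Python. Everything below is hypothetical: the table, the column names, and the two small registries stand in for a governed metadata layer, and the code is not how any particular BI product implements one.

```python
# Centrally governed definitions; end users pick names, never write SQL.
MEASURES = {
    "total_revenue": "SUM(order_amount)",
    "order_count": "COUNT(*)",
}
DIMENSIONS = {
    "region": "customer_region",
    "order_month": "DATE_TRUNC('month', order_date)",
}


def build_query(measure: str, dimension: str, table: str = "orders") -> str:
    """Assemble SQL from vetted definitions and reject anything unregistered."""
    if measure not in MEASURES:
        raise ValueError(f"'{measure}' is not a vetted measure")
    if dimension not in DIMENSIONS:
        raise ValueError(f"'{dimension}' is not a vetted dimension")
    dimension_sql = DIMENSIONS[dimension]
    return (
        f"SELECT {dimension_sql} AS {dimension}, {MEASURES[measure]} AS {measure} "
        f"FROM {table} GROUP BY {dimension_sql}"
    )


print(build_query("total_revenue", "region"))
```

Because every report draws on the same definition of total_revenue, a fix to that definition is made once and reaches every report that uses it.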

Storytelling Analytics

There isn’t much that can be done about storytelling. If somebody has an agenda to promote, they will do so. Having said that, there are modern tools for ensuring that the underlying objects in content can be traced. This enables organizations to validate the most commonly used data elements and who the experts are within an organization on certain topics.

Looker

Looker has been gobbled up by Google. Ten years ago, they resurrected a technology that had been heavily used by Business Objects, Cognos, and MicroStrategy to centralize analytical metadata in a model that could be used across an endless number of reports and dashboards. The metadata was designed in a markup language called LookML. What separated Looker from the legacy BI vendors was that they were born in the cloud. Their rise in popularity was meteoric, as the product allowed organizations to deliver both governance and cloud for analytics.

Alation

Alation is an organization that was founded 10 years ago to solve the challenge of governing data objects via a data catalog. What immediately separated Alation from others was the automation of gathering statistics about queries. At the time, data catalogs were simply giant repositories of manually documented descriptions about data. The common issue was that nobody would participate. Alation met people halfway by automatically gathering query statistics on data elements being used.

A Nerd's Day in the Sun

As organizations retune their processes against their cloud warehouses, the nerds are getting a say in the architecture. The question is how far they will go in letting the organization know how dire the data quality problem is. As the great Thomas Sowell says, “There are no solutions. There are only trade-offs.” So is the case with data quality issues. The market solutions aren’t catch-all solutions; rather, they are the best trade-off. The “solutions” of old that required a king's ransom failed in their promise of a world devoid of data quality problems, but they laid claim to their riches. The solutions of today are more scrappy in addressing data quality, but they do circumvent the "king's ransom" part. Combining these scrappy solutions makes for a loosely-coupled approach to dealing with the complex data quality problem.

Who is Intricity?

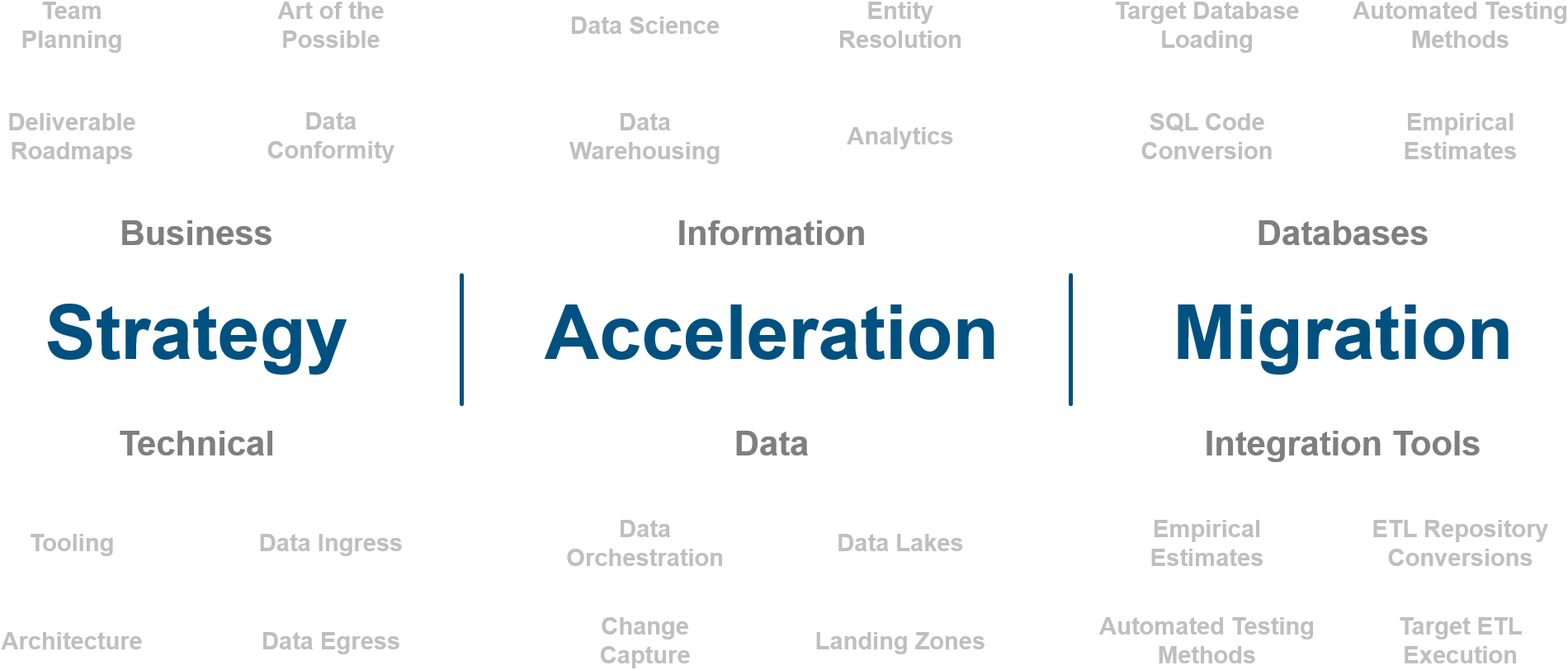

Intricity is a specialized selection of over 100 Data Management Professionals, with offices located across the USA and Headquarters in New York City. Our team of experts has implemented solutions in a variety of industries, including Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity’s area of expertise spans the entirety of the information lifecycle. This means that when your problem involves data, Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first and foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this by using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity’s videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com