In the financial services industry, liquidity describes how easily an investment can be turned into cash. Some investments, however, face serious barriers to becoming cash. Take, for example, a commercial skyscraper in Manhattan. Few buyers can afford a skyscraper, so liquidating an asset like that takes serious time and money.

I want to borrow this term to talk about the logical assets that your organization has built up. To name a few, these are assets like merge statements, mappings, stored procedures, data transformation jobs, and the litany of calculations your organization has. All these assets are in place to take data and turn it into something usable for downstream applications and decision makers. However, if those assets have been in use for many years, they’re likely sitting on old on-premise solution architectures. So how does an organization divest itself of these old architectures in favor of more agile cloud solutions when the code is built for a legacy platform? In other words, how can we solve the code liquidity problem?

In the early years of using cloud as a data center, the sales pitch was an attempt to convince corporations to ditch their private data center leases in favor of renting AWS’s data centers. This was doable, but not the game-changing money saver some people initially expected. The real revelation on how cloud could change the world was in using it as a pay-as-you-go compute service. After all, this is what the cloud was supposed to address in the first place. But this is where the code liquidity problem rears its head. The code that was designed for static on-premise systems just doesn’t work with a multi-tenant pay-as-you-go compute model. Data warehousing solutions, like Snowflake, that took advantage of this ability to provision compute on demand became market leaders seemingly overnight. They were outpacing their competition in ways that many couldn’t even dream of, simply because the very foundations of the legacy environments were suddenly so comparatively weak. However, as organizations sought to move their old data processing environments to Snowflake, they were suddenly hit with the realization that the old code couldn’t come as is. 2019 was the marquee year for this realization, as automation solutions seemed to emerge out of the woodwork, some robust, some vaporware. In either case, the simplicity of code conversion was often overstated.

Over the Wall

Two months ago I was called by an organization that had embarked on a Snowflake conversion from Teradata. The contract provided an over-the-wall conversion of code, essentially requiring the client and their consulting team to make up any differences. Wow, talk about a train wreck. In all your exploration of code conversion options, DO NOT treat conversion as an over-the-wall (one-time automation) event. Converting your code requires iteration in order to contextually construct the bridging necessary to the target language. Here’s the problem: each technology has capabilities that other technologies don’t, so semantic conversion is never going to be enough. This doesn’t mean that development in the target technology is impossible; it just means that the approach has to deal with the gap. Additionally, atomic semantics are easy to convert, but conversions that hold up in context are difficult to pull off in the real world. Let me give you a funny example. When I was in college, my roommate walked in laughing at a story his Economics teacher had told him. The teacher was raised outside of the US with English as a distant second language. As a student at a US university, he had to rapidly learn English. While going through a US cafeteria lunch line, he saw they were serving chicken breasts. He asked the serving lady, “Could I get two boobs please?”

“Excuse me?” she asked as she made her way over to the serving trays.

“Two boobs please.”

By the book, a chicken boob and a chicken breast are the same thing. However, the data you get when you “run the code” is laughably wrong. The same is true during an actual code conversion project. You can’t just conduct a semantic conversion and expect magic on the other end. The converted code will be the first stage of a multi-stage adaptation to the naming conventions, coding patterns, functional gap workarounds, and errors that will surface during a code conversion project. So the automation you employ needs to be accessible during the code conversion project. This will ensure that if you find patterns or methods of converting that sit outside the predetermined semantics/grammar of the automation, those methods can be added and used in the automation during the project. This is also why you want somebody who really understands how data ticks during a conversion.

How Code Conversion Works

First let me say that the biggest misconception about code conversion tools is that they are some kind of magic button. They’re simply not that. The most complete conversion tools we’ve encountered are designed to accompany a conversion project by providing the core tooling to automate code conversion as a process. Here are some of the core features that code conversion tools have.

Grouping Patterns

If you were to do a manual code conversion of, say, a Data Warehouse, you could expect the team to convert one subject matter at a time. This approach appeals to the human need to see an end-to-end process done. The problem with this approach is that the same code is reused in many locations across multiple subject matters. For example, imagine you have some function logic that is reused in 200 locations across the entire deployment. The goal of the tooling is not to identify single scenarios where that piece of logic exists, but rather to allow that logic to be converted in batch. Thus coding patterns become a very important aspect of converting code. The ability to group and tag patterns is something that leading code conversion tools enable.
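To make the idea of grouping concrete, here is a minimal sketch in Python. It is not how any particular conversion tool works; the `normalize` rules, the file paths, and the SQL snippets are all hypothetical. It reduces each snippet to a rough signature by blanking out literals and whitespace, then collects every location that shares a signature so the pattern can be handled once, in batch.

```python
import re
from collections import defaultdict


def normalize(sql: str) -> str:
    """Reduce a SQL snippet to a rough pattern signature (hypothetical rules)."""
    text = sql.upper()
    text = re.sub(r"'[^']*'", "?", text)            # string literals -> ?
    text = re.sub(r"\b\d+(?:\.\d+)?\b", "?", text)  # numeric literals -> ?
    return re.sub(r"\s+", " ", text).strip()        # collapse whitespace


def group_patterns(snippets: dict[str, str]) -> dict[str, list[str]]:
    """Map each pattern signature to every location where it occurs."""
    groups: dict[str, list[str]] = defaultdict(list)
    for location, sql in snippets.items():
        groups[normalize(sql)].append(location)
    return dict(groups)


# Hypothetical snippets pulled from three different subject areas.
snippets = {
    "sales/load_orders.sql": "SELECT COALESCE(amount, 0) FROM orders WHERE region = 'EAST'",
    "hr/load_orders.sql": "select coalesce(amount, 0)  from orders where region = 'west'",
    "finance/ledger.sql": "SELECT SUM(total) FROM ledger",
}

groups = group_patterns(snippets)
for signature, locations in groups.items():
    print(len(locations), signature, locations)
```

The first two snippets differ only in casing, spacing, and a literal, so they land in the same group even though they live in different subject areas. A real tool would work from a parsed representation rather than regular expressions, but the batching principle is the same.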

The Converter

The pre-configured conversion that ships with the conversion tool is an important part of the conversion process. It should capture the semantic conversion but also be able to address some of the functional gaps. If a conversion has never been done from a particular source to a particular target, then it's reasonable to expect some real hands-on customization of how patterns are designed to convert. This is probably a good point to delve into the guts of how these conversion tools work. The leading conversion tools are architected to loosely couple the source and target languages by creating an agnostic code layer. Otherwise the vendors of these tools wouldn’t be able to adapt their solutions to new sources and targets. This means there are code readers, code writers, and an agnostic code layer in between.

This is why the telltale sign of a good converter is a legacy of code generation. In other words, does the vendor have a solution for the use case of generating code from scratch? If they do, then there’s a good chance their converter is built on a solid foundation.
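The reader / agnostic layer / writer split can be sketched in a few lines of Python. Everything here is an illustrative assumption rather than any vendor's design: `NullToZero` is a made-up agnostic node, `read_zeroifnull` is a toy reader for Teradata's `ZEROIFNULL(col)` function, and the two writers emit standard SQL forms chosen only because they are widely supported.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class NullToZero:
    """Agnostic node: 'treat NULL in this column as 0' (hypothetical)."""
    column: str


def read_zeroifnull(expr: str) -> NullToZero:
    """Toy reader: parse ZEROIFNULL(col) into the agnostic node."""
    match = re.fullmatch(r"\s*ZEROIFNULL\(\s*(\w+)\s*\)\s*", expr, re.IGNORECASE)
    if match is None:
        raise ValueError(f"unsupported expression: {expr!r}")
    return NullToZero(column=match.group(1))


def write_coalesce(node: NullToZero) -> str:
    """Toy writer: emit the node as a COALESCE call."""
    return f"COALESCE({node.column}, 0)"


def write_case(node: NullToZero) -> str:
    """Toy writer: emit the same node as a CASE expression."""
    return f"CASE WHEN {node.column} IS NULL THEN 0 ELSE {node.column} END"


node = read_zeroifnull("ZEROIFNULL(amount)")
print(write_coalesce(node))
print(write_case(node))
```

Because neither writer knows anything about the source syntax, supporting a new target means adding one writer, and supporting a new source means adding one reader. That is the practical payoff of the agnostic layer, and it is also why a writer can generate code from a hand-authored node with no legacy code involved at all.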

Adaptation

Remember when we talked about code conversion not being a magic button? Well, adaptation is where the rubber meets the road, and it's the reason why code conversion isn’t an over-the-wall event. The converted code is going to either pass or fail to compile, and either match or fail to match the data in the target environment. This is where adaptation of the conversion patterns may be necessary. For example, we recently did a conversion where we needed to create stored procedures in Snowflake. At the time this functionality didn’t even exist in Snowflake, so we coded a pattern in the code conversion tool so that when the legacy stored procedure patterns triggered, it would convert them to a Python script which could simulate a stored procedure. The conversion tool didn’t support this at the time, so we built that new code pattern during the engagement. Another example came during a Teradata conversion. As we went to take the solution into production, the enterprise scheduling team required a large number of code flags to be inserted into the deployment. Had we been doing this by hand, the change would have added 4 months of work. Instead we were able to make the updates in about 3 weeks, with a 4th week for validation. Thus you can see the importance of having access to the conversion tooling during the project, as it’s nearly impossible to account for every gremlin you’re going to encounter.
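As a rough illustration of why access to the tooling matters, the sketch below models a converter as an ordered list of rewrite rules that the project team can extend mid-engagement. The `Converter` class, the `SCHED_FLAG` comment format, and the sample statement are all hypothetical; real tools operate on parsed code rather than regular expressions. The only real-world detail assumed is that Teradata accepts `SEL` as an abbreviation for `SELECT`.

```python
import re
from typing import Callable


class Converter:
    """Toy converter: applies regex rewrite rules in the order they were added."""

    def __init__(self) -> None:
        self.rules: list[tuple[re.Pattern[str], Callable[[re.Match[str]], str]]] = []

    def add_rule(self, pattern: str, rewrite: Callable[[re.Match[str]], str]) -> None:
        compiled = re.compile(pattern, re.IGNORECASE | re.MULTILINE)
        self.rules.append((compiled, rewrite))

    def convert(self, code: str) -> str:
        for pattern, rewrite in self.rules:
            code = pattern.sub(rewrite, code)
        return code


converter = Converter()

# Rule that "ships with the tool": expand the SEL abbreviation.
converter.add_rule(r"\bSEL\b", lambda m: "SELECT")

# Rule added during the project: prefix each INSERT with a scheduler flag.
# The flag format is invented for this example.
converter.add_rule(
    r"^INSERT INTO (\w+)",
    lambda m: f"-- SCHED_FLAG:{m.group(1)}\n{m.group(0)}",
)

legacy = "INSERT INTO sales SEL a FROM staging"
print(converter.convert(legacy))
```

Once the new rule is registered, rerunning the converter applies it to every matching statement in the deployment, which is the kind of batch change that would otherwise have to be made by hand.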

Testing, Testing, and More Testing

You can go overboard on testing. Just consider for a moment that you’re taking an environment that may have taken a decade to create and you’re now expecting to test all of it. So don’t be surprised if the testing is larger than the code conversion effort. There are a few levels of testing. There’s a compile test, which represents the most basic level and doesn’t really represent a complete test. For example, you can comment out code and it will pass a compile test, but that doesn’t mean the code works. This is where we get into data testing, and really where the costs can spiral out of control. The core issue here is that to complete a data test, test cases need to be written and data provided for each test case. This isn’t something that a consulting company can easily create, since they likely didn’t create the legacy system. Thus the client must take on the requirement of unit-level data testing, which can often be a time and money black hole. So some organizations opt to take it one level above the unit-level patterns and focus on subject matter testing once all the sub-patterns of code have been converted. Yet another level above, you have parallel testing. This is really the easiest way to test, as it surfaces differences between the existing production environment and the future environment being converted to. It is typically done using hash scripts which continuously test source and target environments for differences. What's nice about this approach is that it provides time for all the triggers in the production environment to fire, without requiring an analyst to spell out all those before/after test cases. The downside is that the environments have to run in parallel for a reasonable duration to ensure that all the errors have been shaken out. At any rate, testing is not an SI-only initiative, so you should plan on the testing being as large as, if not larger than, the actual code conversion.
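A hash-based parallel test can be sketched as follows. This is a simplified assumption of what such a script does, not a specific product's implementation: the `row_hash` and `diff_tables` helpers, the separator and NULL sentinel, and the sample rows are all made up, and a real script would pull rows from the two platforms rather than from in-memory lists.

```python
import hashlib


def row_hash(row: tuple) -> str:
    """Hash one row; NULLs get a sentinel so they differ from empty strings."""
    parts = ("\x00NULL" if value is None else str(value) for value in row)
    return hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()


def diff_tables(source_rows: list[tuple], target_rows: list[tuple], key_index: int = 0) -> dict:
    """Compare two row sets by key and report where they disagree."""
    source = {row[key_index]: row_hash(row) for row in source_rows}
    target = {row[key_index]: row_hash(row) for row in target_rows}
    shared = source.keys() & target.keys()
    return {
        "missing_in_target": sorted(source.keys() - target.keys()),
        "extra_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(key for key in shared if source[key] != target[key]),
    }


# Hypothetical rows from the legacy (source) and converted (target) environments.
legacy_rows = [(1, "a", 10), (2, "b", 20), (3, "c", 30)]
converted_rows = [(1, "a", 10), (2, "b", 25), (4, "d", 40)]

report = diff_tables(legacy_rows, converted_rows)
print(report)
```

Run on a schedule while both environments process the same inputs, a comparison like this flags the keys that need investigation without anyone writing individual test cases. One practical caveat: values must be rendered to text identically on both platforms (dates, decimals, trailing spaces), or the hashes will differ even when the data is logically the same.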

Promotion to Production

Large organizations have a code promotion process that often requires multiple steps before it can be considered ready for production. For example there needs to be regression testing to ensure that the environment can stand up to production failure edge cases. Often these are contingent on the tools being leveraged and nuanced for each client. Often the organization’s own Production Deployment teams will have their own checklists for regression testing.

In addition to regression testing, it’s important to be thinking about your enterprise scheduling tools. Often those tools themselves have code that needs to be injected into the target platform. This is one case where having a conversion tool makes all the difference.

Avoiding Liquidity Issues in the Future

Ultimately the core problem is that code has always been tightly coupled with the runtime engine. After all, the runtime of an ETL tool or a SQL database will only understand code that is native to it. So organizations keep dealing with vendor change after vendor change through giant conversion projects. It reminds me of the hilarious video titled “It’s NOT about the nail.”

To this day, there are integration vendors that tout their 95% customer retention, but those in the know are precisely aware why that retention rate is so high. The effort of exiting the chosen solution is so high that it's nearly impossible to justify the expense.

Few people know much about the code generation tools that basically wave this problem away, but that’s because it’s a very nascent technology. The ability to specify coding patterns and have those patterns expressed as native runtime code in the runtime engine of your choosing is pretty exciting. Imagine having the code for your desired runtime getting auto generated on the fly. Truly then, vendors would compete on features and no longer on the illiquidity of the implemented code.

Who is Intricity?

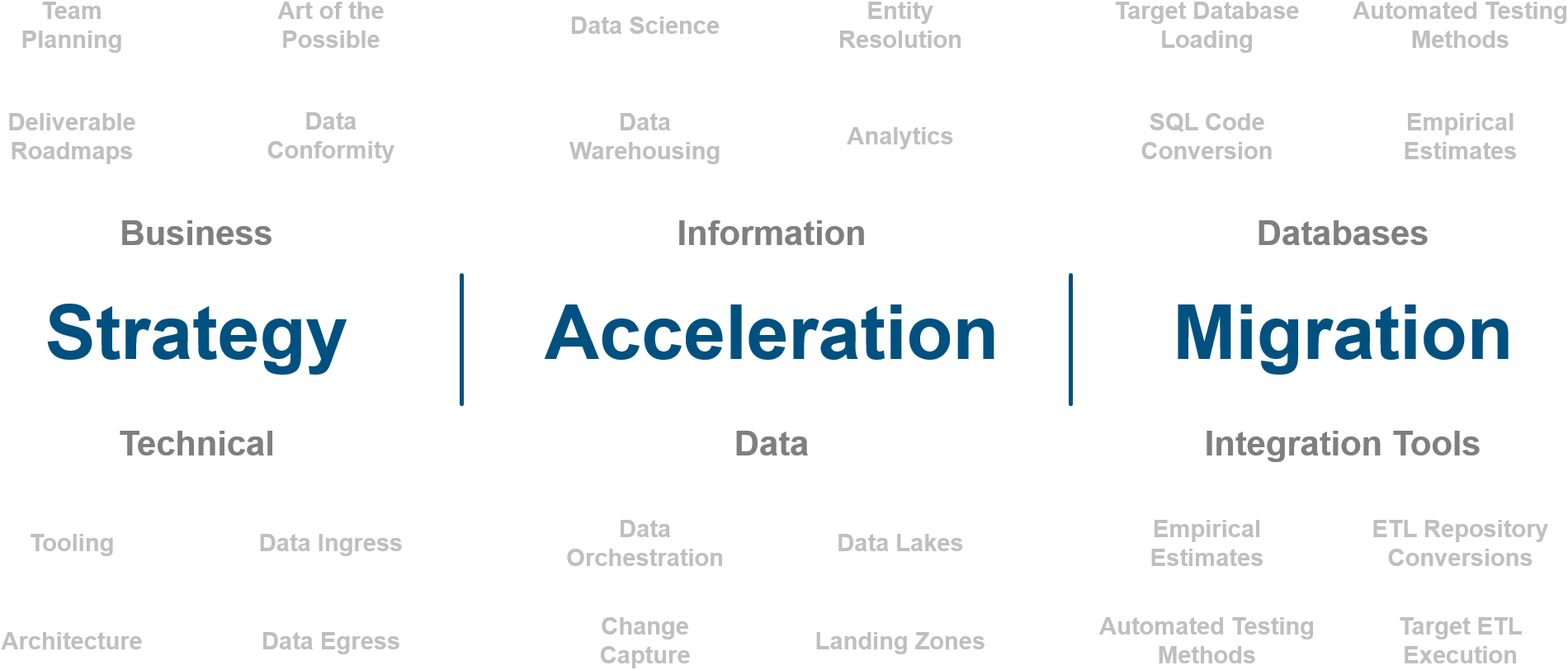

Intricity is a specialized selection of over 100 Data Management Professionals, with offices located across the USA and Headquarters in New York City. Our team of experts has implemented in a variety of Industries, including Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity’s area of expertise spans the entirety of the information lifecycle. This means when your problem involves data, Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first and foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this by using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity’s videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com