![]()

10 years ago if you were a database developer SQL was the one language that you needed to know. It was the backbone of countless applications, providing a stable bridge between structured data and business intelligence. Any serious foray into the data realm required fluency in SQL's syntax, its powerful capabilities, and nuanced intricacies.

However, as the digital landscape evolved, so did the tools and languages associated with data management and analysis. Python, a high-level programming language celebrated for its readability and versatility, began its ascent as a dominant force in the world of data science and analytics. With an extensive array of libraries like Pandas, NumPy, and SQLAlchemy, Python has not only made data manipulation and analysis easier but has also challenged the traditional boundaries between application development and data processing.

But beyond the technical capabilities, the cultural and behavioral differences between SQL and Python developers also present a fascinating study. As part of our exploration, we will investigate the characteristic behaviors, mindset, and practices that define developers from each camp. The insights gained from this investigation can serve as valuable guidance for teams, hiring managers, and educators in understanding the mindset and ethos of these two communities.

Making Python Data Friendly

In the domain of programming languages, Python had already established itself as a versatile tool, hailed for its intuitive syntax, vast standard library, and a community-driven ecosystem. However, when it came to the world of data, there was a gap that needed to be addressed. That's where Pandas stepped in, fundamentally altering Python's position in the data landscape.

DataFrame - A Powerful Data Structure

At the heart of Pandas is the DataFrame, a two-dimensional, size-mutable, and heterogeneously-typed tabular data structure that offers labeled axes (rows and columns). This resembles the spreadsheets that many analysts and statisticians are familiar with. The introduction of DataFrames provided a straightforward, memory-efficient way to organize and manipulate structured data, making tasks that were once cumbersome in raw Python suddenly seamless.

Data Import/Export Flexibility

Pandas offers a plethora of functions to read from and write to various data formats, from CSV, Excel, SQL databases, to more complex file types like Parquet and Feather. This made Python, with Pandas, a universal tool for data extraction and transformation. Data professionals no longer needed to juggle multiple tools for different file types.

Robust Handling of Missing Data

Data in the real world is often messy. Prior to Pandas, handling missing data in Python was a convoluted process. Pandas introduced structures and functions that treated null values as first-class citizens, offering multiple strategies to identify, manipulate, or interpolate missing data points.

Integrated Time Series Functionality

Time series analysis is a staple in many data disciplines. Pandas brought about specialized functions and structures, like the DatetimeIndex, which made time series manipulations, aggregations, and transformations intuitive and efficient.

Chainable Operations

Pandas made data transformations more readable and concise through its ability to chain operations. This has not only improved code readability but also allowed for more interactive and exploratory data analysis.

Alignment with Popular Data Manipulation Paradigms

Pandas integrated seamlessly with SQL-like operations (group by, join, merge), making it easier for professionals from an RDBMS background to transition into Python-centric data manipulation.

Integration with Other Python Data Libraries

Pandas doesn't exist in isolation. Its tight integration with other Python libraries, such as NumPy for numerical operations, Matplotlib and Seaborn for visualization, and Scikit-learn for machine learning, positioned Python as a holistic data science environment.

Data Science with Python/Pandas

The rise of data science as a discipline has seen professionals wrestling with complex, multi-step analytical processes. Each phase of analysis, from data cleaning to transformation and modeling, builds on the previous, creating an interconnected chain of insights. The Pandas DataFrame has revolutionized this workflow, offering data scientists a dynamic platform where they can iteratively build and refine their analyses, mirroring the philosophical idea of "standing on the shoulders of giants."

Before the advent of DataFrames, data scientists often had to jump between various tools or environments as their analysis grew in complexity. With DataFrames, the entire analytical journey, from raw data import to final visualization or model, can be conducted within the same Python environment, and more importantly, within the same data structure. This ensures continuity, minimizes context switching, and allows scientists to quickly iterate.

The interactive nature of environments like Jupyter Notebooks combined with the responsiveness of DataFrames creates a feedback loop where data scientists can immediately see the results of their transformations. Thus, they can then make informed decisions on subsequent steps, optimizing their analyses in real-time. On top of this, by treating each DataFrame operation as a building block, data scientists can establish modular workflows. Once a particular transformation or analytical step is validated, it can be reused, much like how scientists historically have built upon the validated findings of their predecessors.

Data Analytics with SQL

In the ever-evolving realm of technology, where new languages and tools emerge with rapid frequency, SQL (Structured Query Language) stands as a testament to the power of simplicity, clarity, and robustness. Created in the early 1970s, SQL has navigated more than half a century of technological shifts, maintaining its pivotal role in the world of data. Despite numerous endeavors to replace it or propose alternatives, SQL's throne as the premier query language remains largely uncontested. Let's delve into the reasons for this enduring dominance.

Unparalleled Simplicity

SQL's syntax is both expressive and intuitive. Constructed on the principles of the English language, even complex queries can be read and understood linearly, almost like sentences. This has made SQL accessible not just to software engineers but also to data analysts, business professionals, and those new to the world of databases. Its declarative nature – where users specify what they want, not how to get it – abstracts away the complexities, allowing focus on the data problem at hand.

Universal Applicability

SQL has been embraced by nearly every relational database system in existence. Whether you're using MySQL, PostgreSQL, Oracle, or Microsoft SQL Server, the core syntax and principles remain consistent. This universality means that learning SQL provides access to a vast ecosystem of database technologies.

Performance Optimizations

Under the hood, SQL-based systems have undergone decades of optimization. Database engines are exceptionally adept at interpreting SQL queries, optimizing them, and rapidly retrieving results, even from massive datasets. These performance benefits are hard to replicate in newer, alternative querying languages.

Rich Ecosystem and Integration

Because of its long-standing history and widespread adoption, a plethora of tools, extensions, and integrations have been developed around SQL. Whether it's reporting tools, data visualization platforms, or ETL processes, SQL's presence is ubiquitous.

Attempts at Replacement and Their Shortcomings

Over the years, various languages and frameworks have been proposed as "SQL killers." Whether it's domain-specific query languages or newer paradigms associated with NoSQL databases, many have promised more flexibility or scalability. However, while some of these have found niche applications, none have matched SQL's combination of simplicity, expressiveness, and power. Often, these alternatives come with steeper learning curves or lack the comprehensive tooling and support that surrounds SQL.

All in One Query with SQL

In the intricate dance of data retrieval and manipulation, SQL developers exhibit a unique choreography. Unlike developers in other languages who might employ iterative or multi-step processes, SQL developers often lean towards a holistic approach. They aim to capture the essence of their data requirements in a singular, cohesive query. This approach is not just a matter of preference; it's grounded in the mechanics and strengths of relational database systems.

SQL developers, given the nature of the language, are trained to think about data retrieval and manipulation in terms of sets. Instead of piecing data bit by bit, the ideal is to describe the entire dataset—or transformation—in one comprehensive swoop. This mindset is instilled early on, where the power of SQL shines brightest when its queries are both concise and encompassing.

Leveraging the Power of Database Optimizers

One of the pivotal components of any modern RDBMS (Relational Database Management System) is its query optimizer. When presented with a query, the optimizer's job is to determine the most efficient way to execute it. It evaluates numerous factors, such as available indexes, table statistics, and the current system workload, to devise an optimal execution plan.

The intricacies of these optimizers are better harnessed when they're given the "full picture" in a single query. Fragmenting a data request into multiple smaller queries can inadvertently bypass the optimizer's capability to streamline the entire data retrieval process. In essence, a comprehensive query allows the optimizer to better gauge the intersections, unions, and transformations required and subsequently streamline operations.

Fetching data from a database, especially in large quantities or across networks, can be time-consuming. By consolidating their requirements into a single query, SQL developers reduce the number of round trips between the application and the database. This not only trims latency but also reduces the overall load on both the database server and the network.

Consistency and Atomicity

SQL's roots in the principles of ACID (Atomicity, Consistency, Isolation, Durability) transactions mean that operations are treated as indivisible units. Capturing data needs in one query ensures that the entire operation either completes successfully or fails entirely, providing a consistency guarantee. This is especially crucial in scenarios involving data modifications where piecemeal changes can lead to data inconsistencies.

Convergence of Query Languages

The rise of cloud-based database solutions has transformed the landscape of data storage and retrieval, ushering in an era of unprecedented flexibility. A striking feature of this transformation has been the broadening array of query language options offered by cloud vendors. No longer is there a strict delineation between SQL-centric databases and those accommodating other languages or paradigms. Instead, we're witnessing a convergence of cultures as SQL and Python (among other languages) find themselves meeting in the middle. This merger of philosophies is redefining traditional dogmas associated with querying and data analysis.

Re-evaluation of 'Best Practices'

With multiple query languages at their disposal, data professionals are prompted to reconsider traditional best practices. While SQL developers might lean towards single, all-encompassing queries, the integration of Python nudges them towards a more iterative, exploratory approach for queries requiring highly complex statistics. Conversely, Python developers, with SQL's set-based logic now readily available, can adopt more holistic data retrieval and manipulation patterns that better marry up to query optimizers.

Rules of Thumb

When determining whether to employ SQL or Python for a particular task, consider the nature of the expected output:

- For deterministic tasks where consistency and repeatability are paramount, SQL should be your first consideration.

- For probabilistic tasks where there's an element of randomness or uncertainty, Python's versatile ecosystem might be better suited.

SQL’s Math

SQL, at its core, is deeply rooted in relational algebra—a subset of algebra dedicated to set theory and operations on sets. This foundation imparts to SQL its powerful capabilities, especially when it comes to aggregating and transforming data. Transactional data, which often comprises myriad records representing business activities, finds a natural ally in SQL, primarily due to this algebraic underpinning. Let's explore why the algebraic nature of SQL is especially conducive for aggregating transactions.

Transactional data inherently belongs to the realm of sets. Each transaction can be viewed as an element of a larger set, representing all transactions over a given period or of a specific type. SQL's operations—like SELECT, JOIN, UNION, and INTERSECT—are direct implementations of set theory operations. They allow for efficient categorization, filtering, and combination of transactional records, making the aggregation process intuitive and systematic.

When we think of aggregating transactions, we're often looking at algebraic operations like summation, averaging, or counting. SQL's aggregation functions, such as SUM, AVG, COUNT, and others, are direct manifestations of these algebraic operations. They allow for straightforward computation on sets (or subsets) of transactional data. Given a table of sales transactions, for instance, calculating the total sales for a particular product or the average sale per region becomes a concise and clear SQL query, mirroring algebraic expressions.

Algebra, being a branch of mathematics, is deterministic in nature. An algebraic expression will yield the same result whenever evaluated, given the same inputs. SQL, by virtue of its algebraic foundation, embodies this determinism. When aggregating transactions—especially for critical business metrics like revenue, profit, or inventory counts—this consistency is paramount. Organizations can trust that their aggregated figures, derived using SQL, are both accurate and reproducible.

Beyond simple aggregations, sometimes transactional data requires more complex transformations. These might include filtering transactions based on certain criteria, merging multiple transaction sources, or creating derived metrics. SQL's relational algebraic constructs, such as JOINs, GROUP BY clauses, and conditional statements, facilitate these transformations methodically. Each SQL operation can be visualized as an algebraic transformation, ensuring that data remains structured and coherent throughout the aggregation process.

One of the reasons for this harmonious union stems from Python's and Pandas' inherent leanings toward probabilistic approaches. Let's delve into why this probabilistic inclination positions Python and Pandas as prime choices for data science endeavors.

Python/Panda’s Math

The realm of data science often grapples with uncertainty. Whether predicting stock market fluctuations, analyzing customer behavior, or gauging the efficacy of a new medical treatment, data scientists routinely encounter variability and randomness.

Central to many data science tasks is the concept of sampling—a probabilistic method where a subset of data is taken as representative of the whole. With Pandas, sampling from large datasets becomes a trivial task. Further, Python's rich ecosystem provides tools for hypothesis testing, enabling data scientists to make probabilistic inferences about populations based on these samples.

Python is home to renowned machine learning libraries like scikit-learn, TensorFlow, and PyTorch. These tools often employ probabilistic models, such as Bayesian networks, Gaussian processes, or logistic regression. Pandas DataFrames serve as the foundation for these models, where data cleaning, transformation, and feature engineering tasks are efficiently handled. One of the popular probabilistic methods in data science is the Monte Carlo simulation, used to estimate numerical results by random sampling. Python, given its computational abilities and integration with libraries like NumPy, makes implementing Monte Carlo simulations straightforward. And with Pandas, aggregating and analyzing the results of these simulations becomes a seamless exercise.

Many real-world phenomena, from stock prices to weather patterns, can be represented as time series, which often exhibit probabilistic behaviors. Pandas is renowned for its robust time series analysis capabilities, and when combined with Python's probabilistic modeling tools, it provides a comprehensive platform for analyzing and predicting stochastic processes.

Don’t Make the Rule of Thumb a Religion

In the evolving landscape of data processing and analysis, both SQL and Python have showcased their respective strengths, often linked to deterministic and probabilistic outputs. However, it's crucial to understand that the capabilities of these tools are not rigidly confined to these classifications. Indeed, with enough ingenuity, one could stretch SQL to tackle probabilistic challenges, just as one could mold Python to address deterministic tasks. But there's an inherent trade-off in doing so.

When pushing SQL into the probabilistic realm, the queries can become convoluted and difficult to maintain. The inherent set-based structure of SQL, while immensely powerful for structured data operations, may not be the most intuitive medium for tasks requiring random sampling, stochastic simulations, or complex probabilistic models. The resulting SQL code can be intricate, challenging to debug, and perhaps less efficient than a more naturally-suited tool.

Conversely, using Python for strictly deterministic tasks—especially those that are more naturally expressed using set operations in SQL—can sometimes result in an inflated compute footprint. The iterative nature of Python, while versatile, can be more resource-intensive than the set-based operations of SQL, especially when handling large volumes of data. This might lead to longer execution times and increased costs, particularly in cloud-based environments where compute resources are metered.

The overarching lesson here is not about the limitations of these tools, but rather the importance of pragmatism in choosing the right tool for the job. Just as a skilled craftsman selects the most suitable tool for each task, so should data professionals be judicious in matching their challenges with the most appropriate solutions. By respecting the inherent strengths of each tool and understanding their trade-offs, we can achieve more efficient, maintainable, and effective outcomes.

In summary, while both SQL and Python are incredibly versatile and powerful in their own rights, recognizing and aligning with their natural strengths ensures a smoother and more optimized data journey. Balancing idealism with pragmatism, in this context, is not just a philosophical stance but a pathway to excellence in the data realm.

Who is Intricity?

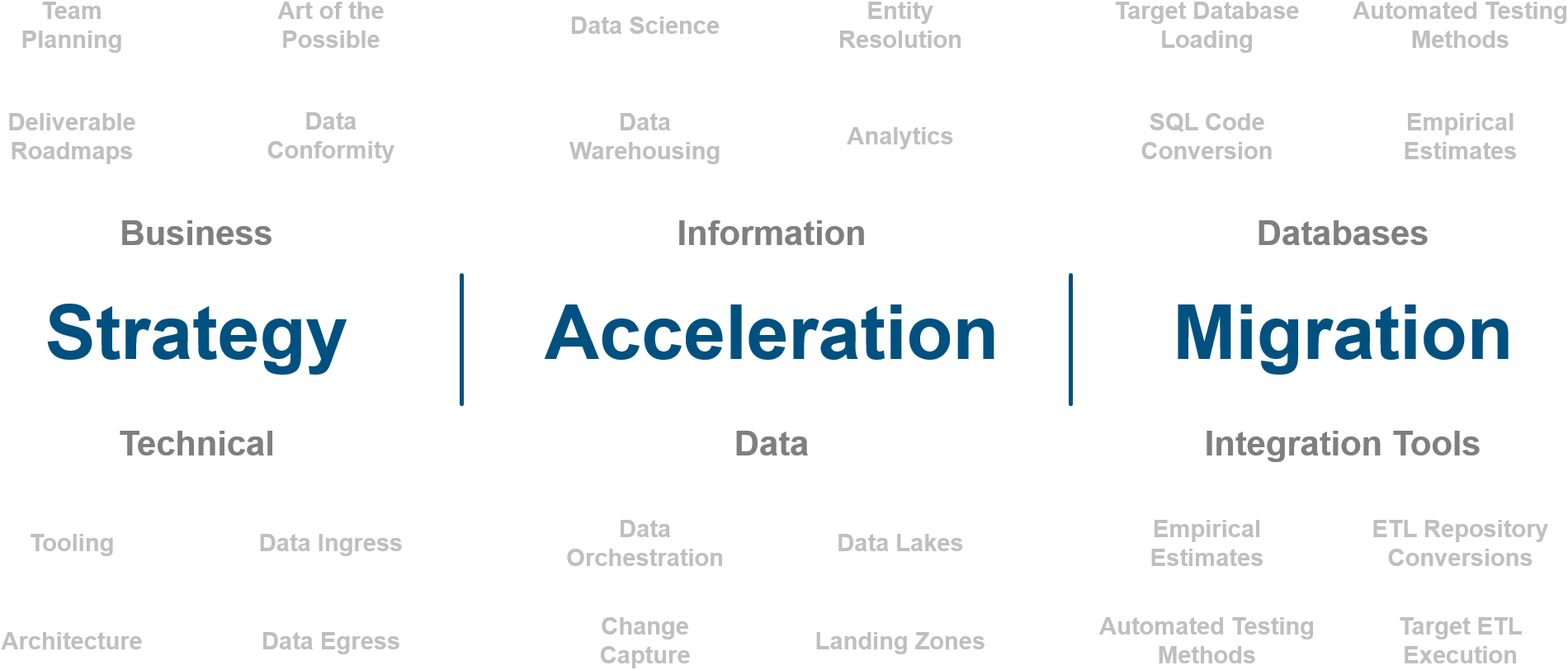

Intricity is a specialized selection of over 100 Data Management Professionals, with offices located across the USA and Headquarters in New York City. Our team of experts has implemented in a variety of Industries including, Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity’s area of expertise spans the entirety of the information lifecycle. This means when you’re problem involves data; Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first a foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this through using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity’s videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com

Sample@gmail.com appears invalid. We can send you a quick validation email to confirm it's yours