Is Snowflake an ETL tool?

Written by Jared Hillam

Traditionally ETL tools have been used since the 90s. ETL, which stands for Extract, Transform, Load, is a process that has been fundamental in the world of data integration and data warehousing. But without coming out and saying it, Snowflake appears to be taking over their ETL space.

OK just to get the semantics right, it's really ELT in the modern world. Specifically ETL, where data is transformed in flight while it's being extracted, and loaded, is something Snowflake will likely never do. But the real meat of complexity and processing is the Transform step. Everything else is kitten play. And it appears that Snowflake has the underpinnings to just… take it over.

Snowflake, at its core, is a cloud data platform. It provides an ecosystem for storing, processing, and analyzing vast amounts of structured and semi-structured data. Most customers consider it as their analytical database, but those that are actually touching the back end, know it much more than that. The micro-partition architecture made data manipulation so fast, and the cloning capabilities made dev ops tasks so easy, that it was a no brainer to do the heaving lifting in Snowflake.

This isn’t new, for many years, databases have been used for large scale data manipulation events. But this meant that the organization was left with a cryptic code-based lineage for refining data. Traditional organizations preferred the simplicity of widgets that showed this lineage graphically from source to target.

Indeed this made the transformation of the data very navigable, and it was easy to sell ETL to business leaders that were less inclined to be coders. But this didn’t mean it performed faster. Query optimizers in databases had matured so much they were faster than the ETL tool at manipulating data by around 2005.

Recent foray

The recent innovation for Snowflake is the Dynamic Table. You can think of this as a table with a rule (written in SQL) and a trigger event for the rule which could be nothing more than a wait time. What developers are doing with this is chaining the dynamic tables together to create a data pipeline. Basically anything you can write in SQL can be chained together with rules, making the data pipeline very simple to execute. Additionally, when the pipeline is created it derives a visual DAG of the dynamic table nodes.

No, you can’t click-and-drill into them or build from the widget interface, but in terms of showing a business person what is happening, it bridges some of that gap. Now combine this with all the other features in Snowflake, and you start to see the value of using Snowflake as the Transformation layer.

Extract and load

The Extract and Load of data into Snowflake from the source, is something that sits outside of Snowflake current domain of technology. Tools such as Fivetran and Qlik Replicate do a fine job of addressing this data replication task. Once this data is onboarded, Snowflake can pick up the data from there and facilitate the transformations necessary to elevate the data from its raw form to more consumable information… BUT, Snowflake isn’t a magic button. This all has to be coded by skilled developers.

In summary

Is Snowflake an ETL tool? In the strictest sense, no. Can it replace an ETL tool? Well… lets just say it can replace the hard part of what an ETL tool does, which is the Transformation. For Extraction and Loading, you will still need some additional tooling.

Who is Intricity?

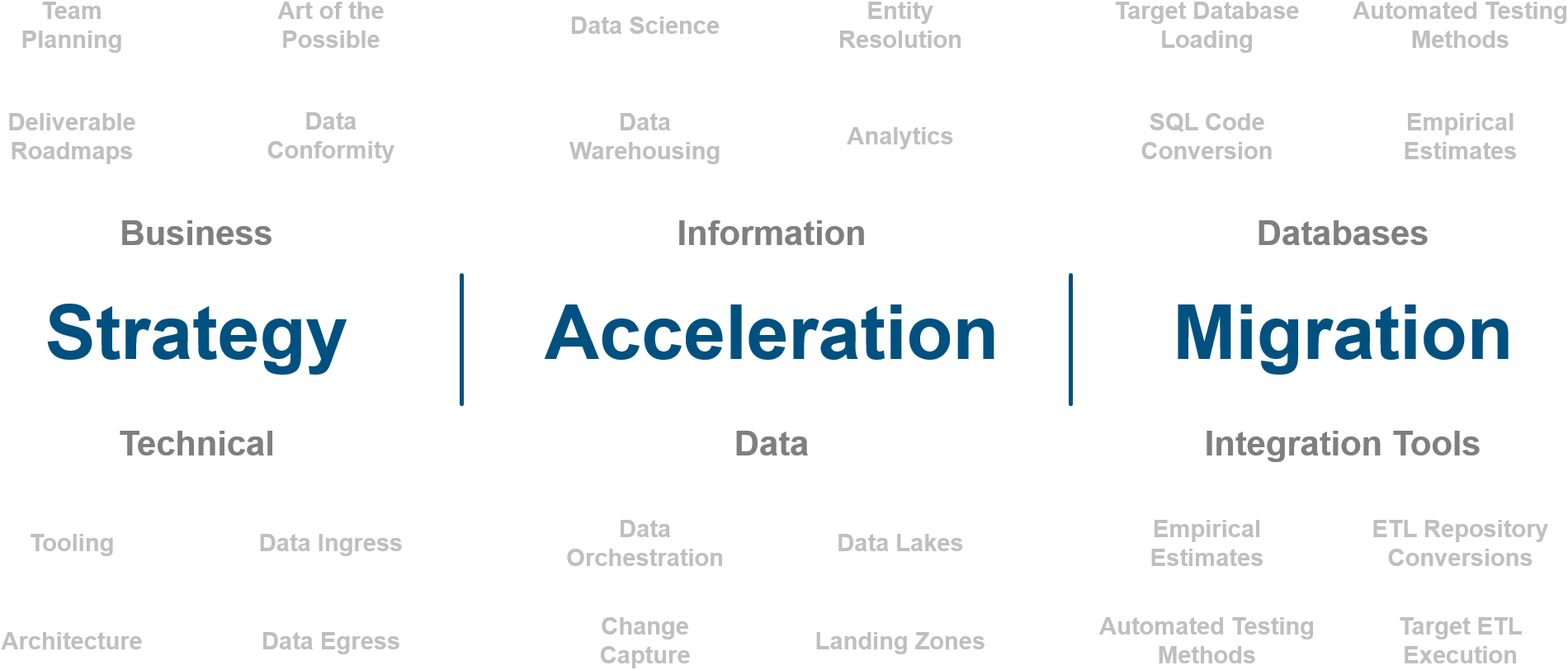

Intricity is a specialized selection of over 100 Data Management Professionals, with offices located across the USA and Headquarters in New York City. Our team of experts has implemented in a variety of Industries including, Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity’s area of expertise spans the entirety of the information lifecycle. This means when you’re problem involves data; Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first a foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this through using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity’s videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com

Sample@gmail.com appears invalid. We can send you a quick validation email to confirm it's yours