I’m convinced that out of every IT discipline, the world of data has the most buzzwords. I’ve always wondered why. I’m still not sure I know the answer, but I believe it’s because true innovations in data are so difficult to come by. So when something even remotely interesting emerges, it almost always needs a new word to get the money moving. The latest buzzword being evangelized is the Data Lakehouse. Cue the holographic interface…

So what is a Data Lakehouse? In a sentence, it’s a single data backbone for both data lake and data warehousing logic and activities. Basically, it’s the architecture we covered in our series The Next Generation Architecture, which you can find here.

Remotely Interesting

The ability to centralize all of the organization's data is the "remotely interesting" part. Legacy Hadoop aficionados would say the capacity to centralize has been around forever, but the truth is that this was never really the case. The hardware and software limitations of the past made centralization so slow and challenging to engineer that it was essentially impossible. Today, we no longer sit through endless meetings solving the engineering complexities of scale. Stepping back, consider how much of our day used to be consumed by scale: planning hardware, scaling for user communities, scaling for competing queries, scaling for data loads, tuning query performance and data loading speeds, onboarding more data, and on and on. Many of the conversations that once required multiple meetings and executive sponsorship are now just a Slack message and a few menu options. Today, if we're dealing with scale issues at all, it's only at the true extremes. So the ability to centralize has only recently become a thing, because the capacity to scale has only recently become a thing.

Now, don't think that just because a new buzzword has been coined, the dream of complete centralization is easy. That dream is still a challenge today, because the sheer complexity of conforming that much data and of marrying people to information assets remains a real hurdle. However, at least the solutions to these problems ARE obtainable, and they're obtainable in a single data platform.

Here's the meat and potatoes that decision-makers need to understand: the logic for how data comes together must live somewhere, and it must take some form. Where it lives, when it executes, and what form it takes is ultimately what you pay solution architects the big bucks to decide (we do have a video on this topic, which you can find here). What's different today is that this logic can live in a single location. Ultimately, what you will be setting up in your organization is an automated process for curating data from low structure and conformity to high structure and conformity, so that it can be shared with a growing number of users and roles as it matures.
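To make the idea of graduated curation more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a prescribed design. The zone names ("raw", "conformed", "curated"), the roles, and the source field names are all hypothetical. Data moves from loosely structured raw records to conformed rows, and then to a curated summary. Each step up widens the set of roles allowed to read it.

```python
# Hypothetical zones and roles: each zone further along the pipeline
# is readable by a wider audience.
ZONE_ACCESS = {
    "raw": {"data_engineer"},
    "conformed": {"data_engineer", "analyst"},
    "curated": {"data_engineer", "analyst", "business_user"},
}


def conform(raw_rows):
    """Standardize names, types, and casing (low -> medium conformity)."""
    return [
        {
            "customer_id": int(row["CustID"]),
            "region": row["Region"].strip().upper(),
            "amount": float(row["Amt"]),
        }
        for row in raw_rows
    ]


def curate(conformed_rows):
    """Aggregate conformed rows into a business-ready summary (high conformity)."""
    totals = {}
    for row in conformed_rows:
        totals[row["region"]] = totals.get(row["region"], 0.0) + row["amount"]
    return [{"region": r, "total_amount": t} for r, t in sorted(totals.items())]


def can_read(role, zone):
    """Return True if the role is allowed to read the given zone."""
    return role in ZONE_ACCESS[zone]


# Hypothetical raw extract with inconsistent casing, whitespace, and string types.
raw = [
    {"CustID": "1", "Region": " east ", "Amt": "10.5"},
    {"CustID": "2", "Region": "West", "Amt": "4"},
    {"CustID": "3", "Region": "EAST", "Amt": "2.5"},
]
conformed = conform(raw)
curated = curate(conformed)
print(curated)
```

In a real platform, each step would be a scheduled transformation and each zone a governed schema. The point of the sketch is only that the curation logic and the access rules can live side by side in one place.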

Trade-Offs

“There are no solutions. There are only trade-offs.”

― Thomas Sowell

Having a single platform to handle your graduated data-to-information pipeline has big advantages. But can you think of what you might be trading off? The centralization might be well worth the trade-off (in fact, it probably is), but recognize that something will be traded. The more you centralize, the more tightly coupled your solution stack becomes, so your opportunity to upgrade parts of that stack independently down the road diminishes. This is why a loosely coupled architecture has often been the hallmark of good data management (we have a video that speaks to loose coupling here). If every aspect of a solution architecture lives under a single umbrella, the organization becomes tightly coupled to a single vendor. That gives the vendor the power to monetize your lock-in through higher pricing, slower innovation, and degraded support. This is only a future risk, meaning there's no guarantee it WILL happen, but it is familiar behavior as vendors reach maturity. Loose coupling made upgrades less painful because the organization didn't have all its eggs in one basket. However, the trade-off of loose coupling is persistent friction between solutions, so, as Thomas Sowell said, "There are only trade-offs."

Here's the good news: converting code from one solution to another has never been easier. Over the last 3 years, solutions have popped up for converting code between platforms. Intricity uses BladeBridge for this purpose today, which makes the risk that comes with centralization less painful. However, this is where discipline in your code really makes a difference. The more standardized your development efforts are, the cheaper a future code migration will be, because code conversion tooling is built to take advantage of patterns in your code. So the more pattern-oriented your code is, the faster and cheaper any future conversion will be. This is why Intricity recommends leveraging code generation tooling.
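To show why pattern-oriented code is cheaper to move, here is a minimal code generation sketch in Python. It is not how BladeBridge or any particular code generation product works. The table metadata, the function names, and the two SQL "targets" are hypothetical. Every load is described once as metadata and rendered through a single template. Retargeting all of the loads therefore means writing one new template rather than hand-editing each statement.

```python
# Hypothetical table metadata; in practice this might live in a config repo.
TABLES = [
    {"target": "dim_customer", "source": "stg_customer", "key": "customer_id",
     "columns": ["customer_id", "name", "region"]},
    {"target": "dim_product", "source": "stg_product", "key": "product_id",
     "columns": ["product_id", "sku", "category"]},
]


def ansi_merge(t):
    """Render one table's upsert as an ANSI-style MERGE statement."""
    non_keys = [c for c in t["columns"] if c != t["key"]]
    sets = ", ".join(f"{c} = s.{c}" for c in non_keys)
    cols = ", ".join(t["columns"])
    vals = ", ".join(f"s.{c}" for c in t["columns"])
    return (
        f"MERGE INTO {t['target']} AS tgt USING {t['source']} AS s "
        f"ON tgt.{t['key']} = s.{t['key']} "
        f"WHEN MATCHED THEN UPDATE SET {sets} "
        f"WHEN NOT MATCHED THEN INSERT ({cols}) VALUES ({vals});"
    )


def delete_insert(t):
    """Render the same load pattern as delete-then-insert, for a hypothetical
    target platform that lacks MERGE."""
    cols = ", ".join(t["columns"])
    return (
        f"DELETE FROM {t['target']} WHERE {t['key']} IN "
        f"(SELECT {t['key']} FROM {t['source']}); "
        f"INSERT INTO {t['target']} ({cols}) SELECT {cols} FROM {t['source']};"
    )


def generate(tables, render):
    """Apply one rendering template to every table's metadata."""
    return [render(t) for t in tables]


for stmt in generate(TABLES, ansi_merge):
    print(stmt)
```

Because every load follows the same pattern, moving all of them to a new platform means swapping `ansi_merge` for `delete_insert` (or for whatever template the new platform needs). The metadata itself stays untouched.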

Ultimate 1UP

So the next time somebody brags about their Data Lakehouse with a twinkle in their eye, maybe you can suggest that you're implementing a Data Lakeoperationsciencesharehouse and put them in their place...

Who is Intricity?

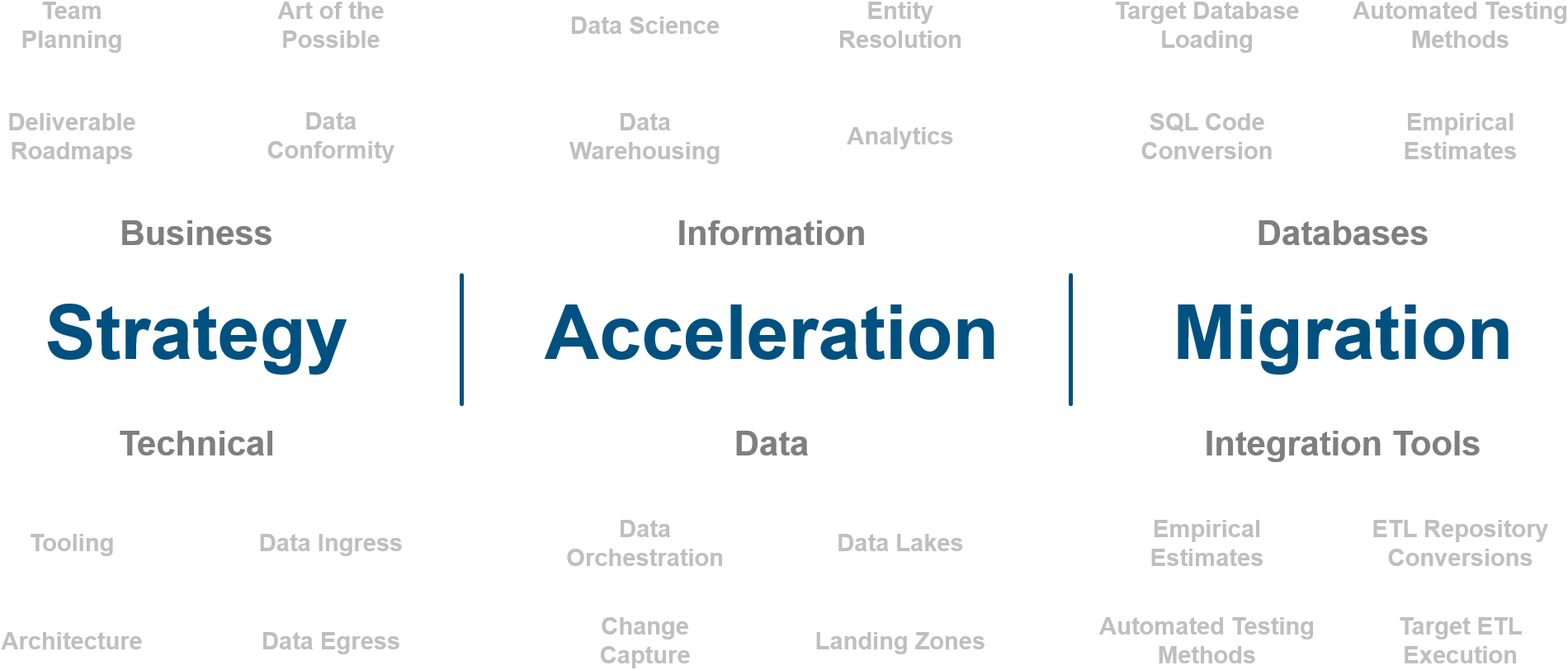

Intricity is a specialized selection of over 100 Data Management Professionals, with offices located across the USA and headquarters in New York City. Our team of experts has implemented solutions in a variety of industries, including Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity’s area of expertise spans the entirety of the information lifecycle. This means that when your problem involves data, Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first and foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this by using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought-after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity’s videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com