About the Client

The Client is a global not-for-profit organization focused on helping young girls maximize their potential through STEM, life skills, and entrepreneurship. Its program membership numbers in the tens of millions, and it is one of the most recognizable brands in the world.

Challenge

With membership in the many tens of millions, the organization was challenged with managing an enormous number of identities. Over time, many iterations of the same member would flow in, sometimes with different addresses, typos, married names, and so on. The challenge was to narrow this enormous pool of records down to unique member IDs. Obtaining these unique IDs would give a more accurate picture of membership participation and improve the accuracy of a large portion of downstream analytics.

Member data spanned entire lifetimes, as members would join as children and continue all the way up as alumni and volunteers in their late 80s. This breadth meant that tracking member attributes effectively required extensive nuance in choosing which algorithmic methods are applied to identifying unique members.

Navigating Constraints

With such massive member rolls, traditional solutions came with a very high barrier to entry on price. The organization needed a viable way of managing unique records without spending a small fortune. It had 8 primary data sources, each with different touchpoints with the membership. Each touchpoint contained its own version of the member records, with varying levels of accuracy. Additionally, some of these systems had their own APIs for data acquisition.

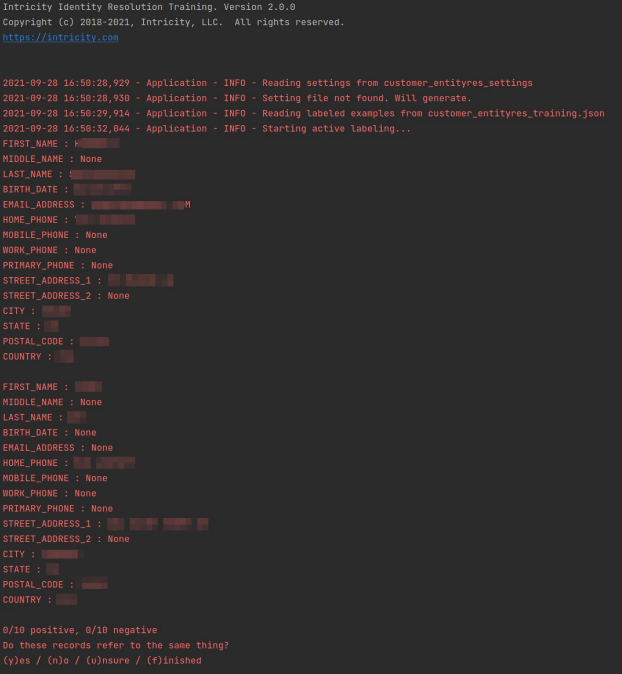

Training machine learning models for identity resolution on a specific organization's data required a much more efficient training method. This is because the organization's data, although voluminous, is still not enough to effectively train a neural network from scratch.

Pairwise string comparison for identity resolution requires the following number of comparisons:

number of records × (number of records − 1) / 2

The scale of the challenge becomes evident when you consider how many records an organization might have. With 40 million records, for example, that comes to nearly 800 trillion comparisons.
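As a quick check of the arithmetic above, the snippet below computes the pair count directly from the formula. Integer division is exact here because n × (n − 1) is always even.

```python
def pairwise_comparisons(n: int) -> int:
    """Number of unordered record pairs among n records: n * (n - 1) / 2."""
    return n * (n - 1) // 2

total = pairwise_comparisons(40_000_000)
print(f"{total:,}")  # 799,999,980,000,000 (nearly 800 trillion)
```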

Win 1:

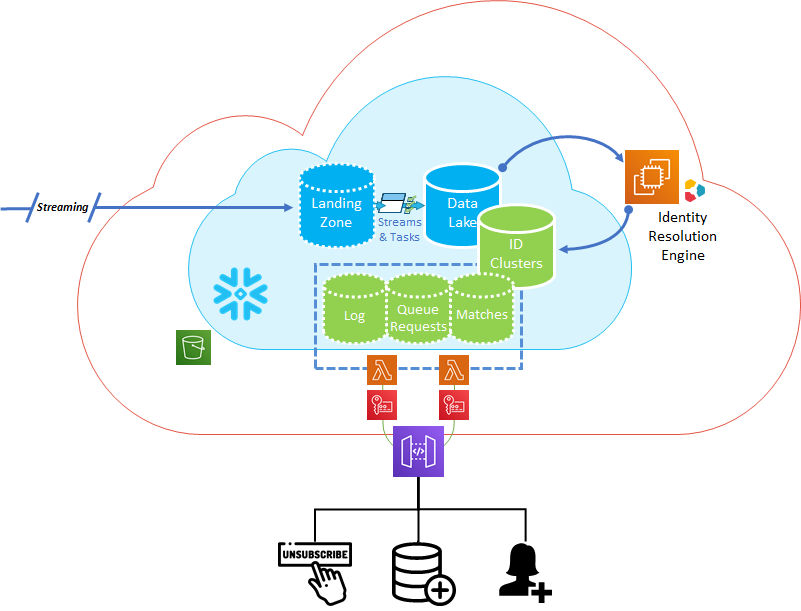

Intricity worked jointly with the client to extend their existing Snowflake deployment with additional data sources. This placed all of the data on a single platform built for scalable compute. It also centralized the customer domain so that unique members could be calculated across it.
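The consolidation itself ran in Snowflake, and the case study does not describe its schema. As an illustration only, the Python sketch below shows the core idea of centralizing the customer domain: rows from several sources are mapped onto one shared schema while recording which system each row came from. The `MemberRecord` fields, the source names (`crm`, `events`), and the column mappings are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MemberRecord:
    source_system: str  # which source system the row came from (provenance)
    source_id: str      # the row's key within that source system
    first_name: str
    last_name: str
    email: str
    country: str

def consolidate(sources: dict[str, list[dict]],
                field_maps: dict[str, dict[str, str]]) -> list[MemberRecord]:
    """Map every source's rows onto one shared schema, keeping provenance."""
    consolidated = []
    for system, rows in sources.items():
        mapping = field_maps[system]  # shared field name -> source column name
        for row in rows:
            values = {field: str(row.get(column, "")) for field, column in mapping.items()}
            consolidated.append(MemberRecord(source_system=system, **values))
    return consolidated

# Hypothetical source systems and column names, for illustration only.
sources = {
    "crm": [{"id": 17, "fname": "Ana", "lname": "Lopez",
             "mail": "ana@example.org", "ctry": "US"}],
    "events": [{"member_no": "E-9", "first": "Anna", "last": "Lopez",
                "email": "ana@example.org", "country": "US"}],
}
field_maps = {
    "crm": {"source_id": "id", "first_name": "fname", "last_name": "lname",
            "email": "mail", "country": "ctry"},
    "events": {"source_id": "member_no", "first_name": "first", "last_name": "last",
               "email": "email", "country": "country"},
}
records = consolidate(sources, field_maps)
```

Keeping `source_system` and `source_id` on every row means each consolidated record can always be traced back to the system it came from once duplicates are merged.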

Win 2:

To optimize efficiency, Intricity created a standardized cleansing step. This eliminated noise from the string comparisons that the Identity Management solution performs in later steps.
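The specific cleansing rules Intricity applied are not described. A minimal sketch of what a standardization step commonly does, assuming Unicode accent folding, lowercasing, punctuation removal, and a small hypothetical `STREET_ABBREVIATIONS` table, might look like this:

```python
import re
import unicodedata

# Hypothetical abbreviation table; a real deployment would use a much larger,
# curated list or a dedicated address-standardization service.
STREET_ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "apartment": "apt"}

def standardize(value: str) -> str:
    """Normalize a free-text field before it is used in string comparisons."""
    # Decompose accented characters and drop the combining marks (é -> e).
    text = unicodedata.normalize("NFKD", value)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower()
    text = re.sub("['\u2019]", "", text)   # drop apostrophes: o'brien -> obrien
    text = re.sub(r"[^\w\s]", " ", text)  # other punctuation becomes a space
    # Splitting and re-joining collapses runs of whitespace.
    return " ".join(STREET_ABBREVIATIONS.get(tok, tok) for tok in text.split())
```

Applying the same function to every field on both sides of a comparison means differences such as "Street" versus "St." or "José" versus "Jose" no longer register as mismatches.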

Win 3:

Intricity implemented its Identity Management solution, powered by Snowflake. The solution is a supervised regression fit system that allowed client team members to review presented matches and indicate which records were not matches. Presenting potential matches and having people "teach" the system what should be excluded trains the Identity Management solution. In turn, the solution automatically allocates across 30 different string comparison algorithms (predicates) to produce the best match for the data set.

This allows the appropriate string comparison algorithms to be applied with nuance. What is powerful about this method is that training can occur on a much smaller data footprint, rather than the truly enormous data volumes and compute requirements of deep learning.
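The internals of Intricity's Identity Management solution are not published here, so the following is a hedged sketch of the general technique the paragraphs above describe, not the product's implementation. It assumes three illustrative comparison functions in place of the 30 predicates, turns each candidate pair into a vector of similarity scores, fits logistic-regression weights by gradient descent on human-labeled pairs (1 = match, 0 = not a match), and picks the pair the model is least certain about as the next one to show a reviewer. All function names and sample records are hypothetical.

```python
import math
from difflib import SequenceMatcher

# Three illustrative comparators standing in for the solution's 30 predicates.
def exact(a: str, b: str) -> float:
    return 1.0 if a and a == b else 0.0

def edit_ratio(a: str, b: str) -> float:
    return SequenceMatcher(None, a, b).ratio()

def token_jaccard(a: str, b: str) -> float:
    ta, tb = set(a.split()), set(b.split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0

FIELDS = ("first_name", "last_name", "email")
COMPARATORS = (exact, edit_ratio, token_jaccard)

def features(r1: dict, r2: dict) -> list[float]:
    """Bias term plus one similarity score per (field, comparator) combination."""
    return [1.0] + [compare(r1[f], r2[f]) for f in FIELDS for compare in COMPARATORS]

def sigmoid(z: float) -> float:
    # Numerically stable logistic function (avoids overflow in math.exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(w: list[float], x: list[float]) -> float:
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)))

def train(pairs, labels, epochs: int = 2000, lr: float = 0.5) -> list[float]:
    """Fit logistic-regression weights to reviewer labels (1 = match, 0 = not)."""
    xs = [features(a, b) for a, b in pairs]
    w = [0.0] * len(xs[0])
    for _ in range(epochs):
        for x, y in zip(xs, labels):
            error = predict(w, x) - y  # gradient of log loss w.r.t. the logit
            w = [wi - lr * error * xi for wi, xi in zip(w, x)]
    return w

def match_probability(w, r1, r2) -> float:
    return predict(w, features(r1, r2))

def most_uncertain(w, candidate_pairs):
    """Active learning: surface the pair whose probability is closest to 0.5."""
    return min(candidate_pairs, key=lambda p: abs(match_probability(w, *p) - 0.5))

def rec(first, last, email):
    return {"first_name": first, "last_name": last, "email": email}

# Hypothetical reviewer-labeled pairs.
labeled = [
    (rec("ana", "lopez", "ana@example.org"), rec("anna", "lopez", "ana@example.org")),
    (rec("maria", "smith", "ms@example.org"), rec("maria", "smith jones", "ms@example.org")),
    (rec("ana", "lopez", "ana@example.org"), rec("maria", "smith", "ms@example.org")),
    (rec("john", "doe", "jd@example.com"), rec("jane", "roe", "jroe@example.com")),
]
labels = [1, 1, 0, 0]
weights = train(labeled, labels)
```

Because the model learns only one weight per (field, comparator) combination plus a bias, it has far fewer parameters to fit than a neural network, which is why a modest number of labeled pairs can be enough.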

Win 4:

Intricity created canopies and groups that broke the nearly 800 trillion comparisons down into more logical sets. These represent segments that are likely to contain matches, such as members in the same country. This splits the membership records into sets that are far more computable at scale, without brute-forcing every comparison.
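The exact canopy and grouping rules are not given. The sketch below shows the simplest form of the idea, key-based blocking: records are only compared with others that share a hypothetical `block_key` (here, country plus the first letter of the last name). Canopy clustering proper, which groups records using a cheap similarity measure with loose and tight thresholds, is a more elaborate variant not shown here.

```python
from collections import defaultdict
from itertools import combinations

def block_key(record: dict) -> tuple[str, str]:
    """Hypothetical blocking key: same country and same last-name initial."""
    return (record["country"], record["last_name"][:1])

def build_blocks(records: list[dict]) -> dict[tuple[str, str], list[dict]]:
    blocks = defaultdict(list)
    for record in records:
        blocks[block_key(record)].append(record)
    return blocks

def candidate_pairs(blocks):
    """Yield pairs only within a block; pairs across blocks are never compared."""
    for members in blocks.values():
        yield from combinations(members, 2)

def comparison_count(blocks) -> int:
    """Total comparisons after blocking: the sum of k * (k - 1) / 2 per block."""
    return sum(len(m) * (len(m) - 1) // 2 for m in blocks.values())

records = [
    {"country": "US", "last_name": "lopez"},
    {"country": "US", "last_name": "lopes"},
    {"country": "US", "last_name": "smith"},
    {"country": "CA", "last_name": "lopez"},
    {"country": "CA", "last_name": "lee"},
]
blocks = build_blocks(records)
print(comparison_count(blocks))  # 2, versus 5 * 4 / 2 = 10 without blocking
```

The trade-off is recall: a true match whose records land in different blocks (for example, a member who moved countries) is never compared, so production systems typically combine several blocking keys.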

Win 5:

The customer gained a Unique Members data set which they can flow all their new systems into for consolidation. The membership records can be used for custom mailers, segmentation, alumni donation tracking, onboarding applications, and many other internal activities related to their critical membership.
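The case study does not say how matched pairs become member IDs. One common approach, sketched here under that assumption, is to treat each confirmed match as an edge between two records and assign one ID per connected component using a union-find structure. The record IDs and the `M000001` ID format are hypothetical.

```python
def assign_member_ids(record_ids, matched_pairs):
    """Cluster records linked by matched pairs; return record_id -> member_id."""
    parent = {rid: rid for rid in record_ids}

    def find(x):
        # Path halving keeps the union-find trees shallow.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Give each cluster (connected component) a sequential member ID.
    cluster_ids, result = {}, {}
    for rid in record_ids:
        root = find(rid)
        if root not in cluster_ids:
            cluster_ids[root] = f"M{len(cluster_ids) + 1:06d}"
        result[rid] = cluster_ids[root]
    return result

ids = assign_member_ids(
    ["crm:17", "events:E-9", "donor:88", "crm:42"],
    [("crm:17", "events:E-9"), ("events:E-9", "donor:88")],
)
print(ids)
# {'crm:17': 'M000001', 'events:E-9': 'M000001', 'donor:88': 'M000001', 'crm:42': 'M000002'}
```

Union-find takes the transitive closure of matches, so a single false-positive link can merge two different people; a thresholded clustering step is a common safeguard.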

Who is Intricity?

Intricity is a specialized group of over 100 Data Management Professionals, with offices across the USA and headquarters in New York City. Our team of experts has delivered implementations in a variety of industries, including Healthcare, Insurance, Manufacturing, Financial Services, Media, Pharmaceutical, Retail, and others. Intricity is uniquely positioned as a partner to the business that deeply understands what makes the data tick. This joint knowledge and acumen has positioned Intricity to beat out its Big 4 competitors time and time again. Intricity's area of expertise spans the entirety of the information lifecycle. This means that when your problem involves data, Intricity will be a trusted partner. Intricity's services cover a broad range of data-to-information engineering needs:

What Makes Intricity Different?

While Intricity conducts highly intricate and complex data management projects, Intricity is first and foremost a Business User Centric consulting company. Our internal slogan is to Simplify Complexity. This means that we take complex data management challenges and not only make them understandable to the business but also make them easier to operate. Intricity does this by using tools and techniques that are familiar to business people but adapted for IT content.

Thought Leadership

Intricity authors a highly sought-after Data Management Video Series targeted towards Business Stakeholders at https://www.intricity.com/videos. These videos are used in universities across the world. Here is a small set of universities leveraging Intricity's videos as a teaching tool:

Talk With a Specialist

If you would like to talk with an Intricity Specialist about your particular scenario, don’t hesitate to reach out to us. You can write us an email: specialist@intricity.com

(C) 2023 by Intricity, LLC

This content is the sole property of Intricity LLC. No reproduction can be made without Intricity's explicit consent.

Intricity, LLC. 244 Fifth Avenue Suite 2026 New York, NY 10001

Phone: 212.461.1100 • Fax: 212.461.1110 • Website: www.intricity.com